Pascal Pernot (2017)

Highlighted by Jonny Proppe

In a recent study on uncertainty quantification, Pernot(1) discussed the effect of model inadequacy on predictions of physical properties. Model inadequacy is a ubiquitous feature of physical models, owing to the various approximations made in their construction. Like data inconsistency (e.g., due to incorrectly quantified measurement uncertainties) and parameter uncertainty, model inadequacy only acquires meaning through comparison with reference data. For instance, a model is inadequate if it cannot reproduce the reference data within their uncertainty range (cf. Figure 1), provided that all other sources of error are negligible. While parameter uncertainty decreases as the reference set grows, the systematic errors arising from data inconsistency and model inadequacy persist unless they are explicitly identified and eliminated.
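The contrast between shrinking parameter uncertainty and persistent systematic error can be illustrated with a minimal Python (NumPy) sketch. Everything in it is a hypothetical assumption chosen for illustration, not taken from Pernot's study: synthetic reference data are generated from a quadratic function with a known noise level and then fitted with a straight line. As the reference set grows, the standard parameter uncertainties shrink (roughly as 1/√N), but the reduced chi-square stays far above 1, because the missing quadratic term is a model inadequacy that additional data cannot remove.

```python
import numpy as np


def fit_linear(x, y, sigma):
    """Least-squares fit of y = a + b*x with a known, uniform data uncertainty sigma.

    Returns the parameter estimates, their standard uncertainties, and the
    reduced chi-square of the residuals.
    """
    X = np.column_stack([np.ones_like(x), x])  # design matrix for a + b*x
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    cov = sigma**2 * np.linalg.inv(X.T @ X)  # covariance from data noise only
    resid = y - X @ beta
    chi2_red = np.sum((resid / sigma) ** 2) / (len(x) - 2)
    return beta, np.sqrt(np.diag(cov)), chi2_red


rng = np.random.default_rng(0)
sigma = 0.01  # hypothetical reference-data uncertainty
results = {}
for n in (10, 100, 1000):
    x = np.linspace(0.0, 1.0, n)
    # Hypothetical "truth" with a quadratic term the linear model cannot capture
    y = 1.0 + 2.0 * x + 0.5 * x**2 + rng.normal(0.0, sigma, n)
    beta, u_beta, chi2_red = fit_linear(x, y, sigma)
    results[n] = (u_beta, chi2_red)
    print(f"N={n:5d}  u(a)={u_beta[0]:.2e}  u(b)={u_beta[1]:.2e}  chi2_red={chi2_red:.1f}")
```

A reduced chi-square that does not approach 1 as N grows is the numerical signature of the inadequacy described above: the residuals are dominated by a systematic trend rather than by the reference-data noise.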

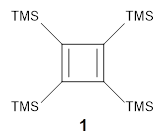

Figure 1. Illustration of model inadequacy. (a) Reference versus calculated (CCD/6-31G*) harmonic vibrational frequencies reveal a linear trend in the data (red line) that deviates from the unit line. In this diagram, the uncertainty of the reference data is too small to be visible. (b) Residuals of temperature-dependent viscosity predictions based on a Chapman–Enskog model reveal an oscillating trend, even when the 2σ confidence intervals of the reference data are considered. Reproduced from J. Chem. Phys. 147, 104102 (2017), with the permission of AIP Publishing.

In related work, Pernot and Cailliez(2) examined the benefits and drawbacks of several Bayesian calibration algorithms (e.g., Gaussian process regression, hierarchical optimization) in tackling these issues. These algorithms handle model inadequacy either through a posteriori model corrections or by parameter uncertainty inflation (PUI). While a posteriori corrected models cannot be transferred to observables not included in the reference set, PUI ensures that the corresponding covariance matrices are transferable to any model comprising the same parameters. However, the resulting prediction uncertainties may not reflect the correct dependence on the input variable(s), because this dependence is dictated by the sensitivity coefficients of the model (the partial derivatives of a model prediction at a given point in input space with respect to the model parameters, evaluated at their expected values). Pernot referred to this issue as the “PUI fallacy”(1) and illustrated it with three examples: (i) linear scaling of harmonic vibrational frequencies, (ii) calibration of the mBEEF density functional against heats of formation, and (iii) inference of Lennard–Jones parameters for predicting temperature-dependent viscosities based on a Chapman–Enskog model (cf. Figure 2). In all three cases, PUI yielded correct average prediction uncertainties, whereas the uncertainties of individual predictions were systematically under- or overestimated.
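The role of the sensitivity coefficients can be made explicit with the standard first-order (linear) propagation of parameter uncertainty to a prediction. In the notation below, which is ours rather than Pernot's, $\hat{\boldsymbol{\theta}}$ denotes the parameter estimates, $\boldsymbol{\Sigma}_{\theta}$ their (possibly inflated) covariance matrix, and $\mathbf{J}(x)$ the vector of sensitivity coefficients at input $x$. For a single scaling factor, $y = s\,x$, the formula reduces to $u(y) = |x|\,u(s)$: inflating $u(s)$ rescales every prediction uncertainty by the same factor, so their dependence on $x$ is fixed by the model and not by the actual residuals.

$$
u^2\big(y(x)\big) \approx \mathbf{J}(x)^{\top}\,\boldsymbol{\Sigma}_{\theta}\,\mathbf{J}(x),
\qquad
J_k(x) = \left.\frac{\partial y(x;\boldsymbol{\theta})}{\partial \theta_k}\right|_{\boldsymbol{\theta}=\hat{\boldsymbol{\theta}}}
$$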

Figure 2. Illustration of the PUI fallacy for different algorithms (VarInf_Rb, Margin, ABC) for the example of temperature-dependent viscosity predictions based on a Chapman–Enskog model. In all cases, the centered prediction bands (gray) cannot reproduce the oscillating trend in the residuals. Reproduced from J. Chem. Phys. 147, 104102 (2017), with the permission of AIP Publishing.
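The PUI fallacy can be reproduced in a toy version of example (i), linear scaling of frequencies. This is our own minimal sketch, not Pernot's code, and it rests on hypothetical assumptions: synthetic reference data whose true relation contains an offset (40 units) that a pure scaling model y = s·x cannot represent, an arbitrary input range and noise level, and a deliberately simple PUI rule that inflates u(s) until the mean squared prediction uncertainty equals the mean squared residual. The algorithms compared in the paper (e.g., VarInf_Rb, Margin, ABC) are more elaborate. Because the sensitivity coefficient is dy/ds = x, the inflated uncertainties grow linearly with x regardless of how the residuals actually behave.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical reference set: the "true" relation has an offset (40 units)
# that a pure scaling model y = s * x cannot represent (model inadequacy).
x = np.linspace(500.0, 3500.0, 200)
y_ref = 0.95 * x + 40.0 + rng.normal(0.0, 1.0, x.size)

# Least-squares estimate of the single scaling factor s (fit through the origin)
s_hat = np.sum(x * y_ref) / np.sum(x**2)
resid = y_ref - s_hat * x

# Simple PUI rule (our assumption): choose u(s) so that the mean squared
# prediction uncertainty equals the mean squared residual on the reference set.
u_s = np.sqrt(np.sum(resid**2) / np.sum(x**2))

# Propagate to individual predictions: dy/ds = x, so u(y_i) = |x_i| * u(s).
u_pred = np.abs(x) * u_s

# If u_pred were reliable, z-scores would have a mean square near 1 everywhere.
z = resid / u_pred
low, high = x < np.median(x), x >= np.median(x)
mse_z_low = np.mean(z[low] ** 2)
mse_z_high = np.mean(z[high] ** 2)

print(f"s_hat = {s_hat:.4f}, u(s) = {u_s:.2e}")
print(f"mean u^2 = {np.mean(u_pred**2):.1f}, mean resid^2 = {np.mean(resid**2):.1f}")
print(f"<z^2> low-x half  = {mse_z_low:.2f}")   # well above 1: uncertainties underestimated
print(f"<z^2> high-x half = {mse_z_high:.2f}")  # well below 1: uncertainties overestimated
```

By construction the average uncertainty matches the average residual, yet the low-x predictions are far more uncertain than claimed and the high-x ones less so, mirroring in a much simpler setting the mismatch between centered prediction bands and structured residuals.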

Pernot’s paper presents a state-of-the-art study of rigorous uncertainty quantification for model predictions in the physical sciences, a practice that has only recently started to gain momentum in the computational chemistry community. His study can be seen as an incentive for future benchmark studies to rigorously assess existing and novel models. Notably, Pernot has made the entire code used in his study available (https://github.com/ppernot/PUIF).

(1) Pernot, P. The Parameter Uncertainty Inflation Fallacy. J. Chem. Phys. 2017, 147 (10), 104102.

(2) Pernot, P.; Cailliez, F. A Critical Review of Statistical Calibration/Prediction Models Handling Data Inconsistency and Model Inadequacy. AIChE J. 2017, 63 (10), 4642–4665.