Andrew F. Zahrt, Yiming Mo, Kakasaheb Y. Nandiwale, Ron Shprints, Esther Heid, and Klavs F. Jensen (2022)

Highlighted by Jan Jensen

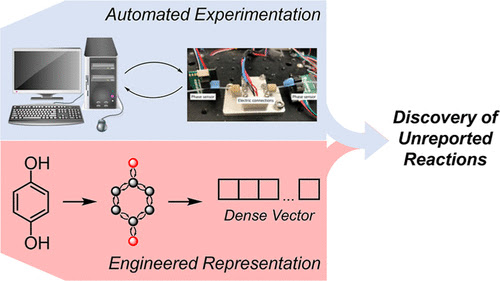

Derek Lowe has highlighted the chemical aspects of this work already, so here I focus on the machine learning, which is pretty interesting. The authors want to predict whether a molecule will react with the 1,4-dicyanobenzene anion after it is oxidized at an anode. They have 141 data points, of which 42% show a reaction.

They tested several classification models using Morgan fingerprints as the molecular representation, but got an accuracy of only 60%. They then reasoned that the accuracy could be improved by using DFT features. However, rather than using molecular features, they decided to use atomic features from an NBO analysis of the radical cation, the neutral molecule, and the radical anion. The feature vector was then tested on several data sets and shown to perform well.
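To put the 60% in context, it helps to compare it with the trivial classifier that always predicts the more common class. The short sketch below does that arithmetic using only the numbers quoted above (42% reactive, 60% accuracy). How the accuracy was evaluated (for example, how the splits were made) is not stated here, so treat the comparison as rough; the function name is just for illustration.

```python
def majority_baseline(frac_positive: float) -> float:
    """Accuracy obtained by always predicting the more common class."""
    return max(frac_positive, 1.0 - frac_positive)


frac_reactive = 0.42    # fraction of the 141 data points that react (from the post)
morgan_accuracy = 0.60  # accuracy quoted for the Morgan-fingerprint models

baseline = majority_baseline(frac_reactive)
margin = morgan_accuracy - baseline

print(f"majority-class baseline: {baseline:.2f}")
print(f"Morgan fingerprints beat it by: {margin:.2f}")
```

In other words, always answering "no reaction" would already be right about 58% of the time on this data set, so the Morgan-fingerprint models are only marginally better than guessing the majority class.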

The question is then how to combine the atomic feature vectors into a molecular representation for the reaction classification. The usual way is graph convolution, but that'll require more than 141 data points to optimise. So instead they use graph2vec, which is an unsupervised learning method, so it is easy to create arbitrarily large training sets. Graph2vec is analogous to word2vec (or, more accurately, doc2vec), which creates vector representations of words by predicting their context in text (i.e. the words that often appear close to the word of interest). For graph2vec, the context is the subgraphs of the input graph.
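To make the "subgraphs as context" idea a bit more concrete, here is a minimal sketch of how a graph can be turned into a bag of subgraph "words". It uses Weisfeiler-Lehman relabelling, which is the approach described in the original graph2vec paper; it is not the authors' code. The function name `wl_subgraph_words`, the element symbols used as node labels, and the hashing scheme are all assumptions for illustration (in the paper the node labels would come from the NBO-derived atomic features, and how those are discretised is not covered here). The doc2vec-style training step that turns these words into an embedding is left out.

```python
import hashlib
from collections import Counter


def wl_subgraph_words(node_labels, edges, iterations=2):
    """Return Weisfeiler-Lehman 'words' (rooted-subgraph labels) for one graph.

    node_labels: dict mapping node id -> initial label (hypothetical: element symbol)
    edges: iterable of (u, v) pairs, treated as undirected bonds
    """
    neighbours = {n: [] for n in node_labels}
    for u, v in edges:
        neighbours[u].append(v)
        neighbours[v].append(u)

    labels = {n: str(lab) for n, lab in node_labels.items()}
    words = list(labels.values())  # depth 0: the atoms themselves
    for _ in range(iterations):
        new_labels = {}
        for n in labels:
            # A node's new label encodes its own label plus its sorted neighbour labels
            neigh = sorted(labels[m] for m in neighbours[n])
            signature = labels[n] + "|" + ",".join(neigh)
            new_labels[n] = hashlib.md5(signature.encode()).hexdigest()[:8]
        labels = new_labels
        words.extend(labels.values())  # one word per node per depth
    return words


# Heavy-atom graphs only; atom numbering is arbitrary
ethanol = wl_subgraph_words({0: "C", 1: "C", 2: "O"}, [(0, 1), (1, 2)])
ethanol_renumbered = wl_subgraph_words({0: "O", 1: "C", 2: "C"}, [(0, 1), (1, 2)])
dimethyl_ether = wl_subgraph_words({0: "C", 1: "O", 2: "C"}, [(0, 1), (1, 2)])

print(Counter(ethanol) == Counter(ethanol_renumbered))
print(Counter(ethanol) == Counter(dimethyl_ether))
```

Each molecule then plays the role of a "document" whose "words" are these subgraph labels. The bag of words does not depend on atom numbering, but it does distinguish ethanol from dimethyl ether once the neighbourhoods are included. Because no reactivity labels are needed to generate the words, the embedding can be trained on as many molecules as one can afford to compute.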

The graph2vec embedder was then trained on 38k molecules (note that this requires 38k DFT calculations). Using this representation, the accuracy for the reaction classifier increased to 74%, which is a significant improvement compared to Morgan fingerprints. The classifier was then applied to the 38k molecules and 824 were predicted to be reactive. Twenty of these were selected for experimental validation and 16 (80%) were shown to be reactive. That's not a bad hit rate!
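Since the validation set is only 20 molecules, it is worth getting a feel for how uncertain that 80% is. The sketch below computes a Wilson score interval for 16 successes out of 20, using the usual normal-approximation value z = 1.96 for roughly 95% coverage. This is my own back-of-the-envelope check, not an analysis from the paper, and it assumes the 20 molecules can be treated as independent trials, which may not hold if they were hand-picked.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96):
    """Wilson score interval for a binomial proportion."""
    p = successes / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return centre - half_width, centre + half_width


low, high = wilson_interval(16, 20)
print(f"hit rate: {16 / 20:.0%}, approximate 95% interval: {low:.2f} to {high:.2f}")
```

The interval is wide, as expected for 20 trials, but its lower end still sits at or above the 42% reactive fraction of the original data set, so the hit rate looks like a genuine enrichment.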

I was not aware of graph2vec before reading this paper and it seems like a very promising alternative to graph convolution, especially in the low data regime.

This work is licensed under a Creative Commons Attribution 4.0 International License.