Contributed by Jan Jensen

This paper describes the first electronic structure calculation, the potential energy surface (PES) of H$_2$, performed on a quantum computer without "exponentially costly precompilation". I know very little about quantum computing, so bear with me as I try to explain this paper to myself in terms even I can begin to understand.

From Wikipedia:

A classical computer has a memory made up of bits, where each bit is represented by either a one or a zero. A quantum computer maintains a sequence of qubits. A single qubit can represent a one, a zero, or any quantum superposition of those two qubit states; a pair of qubits can be in any quantum superposition of 4 states, and three qubits in any superposition of 8 states. In general, a quantum computer with $n$ qubits can be in an arbitrary superposition of up to 2$^n$ different states simultaneously (this compares to a normal computer that can only be in one of these 2$^n$ states at any one time). A quantum computer operates by setting the qubits in a controlled initial state that represents the problem at hand and by manipulating those qubits with a fixed sequence of quantum logic gates. The sequence of gates to be applied is called a quantum algorithm. The calculation ends with a measurement, collapsing the system of qubits into one of the 2$^n$ pure states, where each qubit is zero or one, decomposing into a classical state. The outcome can therefore be at most $n$ classical bits of information. Quantum algorithms are often non-deterministic, in that they provide the correct solution only with a certain known probability.

The paper describes two experiments and I'll focus on the first one. The experiment uses two Xmon transmon qubits, which are a kind of superconducting charge qubit where the two qubit states are uncharged and charged superconducting islands (essentially circuits made of superconducting aluminum film deposited on a sapphire substrate).
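To make the Wikipedia description of superposition and measurement a bit more concrete for myself, here is a minimal classical sketch using only the Python standard library. It stores the 2$^n$ amplitudes of an $n$-qubit state in a list and samples one measurement outcome with probability equal to the squared magnitude of the corresponding amplitude. The function names `uniform_superposition` and `measure` are my own, and this is only an illustration of the bookkeeping, not of how a real quantum computer is programmed.

```python
import math
import random


def uniform_superposition(n):
    """Return the 2**n amplitudes of an equal superposition of n qubits."""
    dim = 2 ** n
    amplitude = 1 / math.sqrt(dim)
    return [complex(amplitude, 0.0)] * dim


def measure(state, rng):
    """Sample one classical bit string from a normalized state vector.

    The probability of each basis state is the squared magnitude of its
    amplitude, so the result carries exactly n classical bits.
    """
    n = (len(state) - 1).bit_length()
    probabilities = [abs(a) ** 2 for a in state]
    index = rng.choices(range(len(state)), weights=probabilities, k=1)[0]
    return format(index, f"0{n}b")


rng = random.Random(0)          # seeded only to make the demo repeatable
state = uniform_superposition(3)
print(len(state), "amplitudes; one measurement gives", measure(state, rng))
```

Three qubits need 8 amplitudes to describe, but each measurement returns only 3 bits, which is why the result is generally probabilistic and the experiment has to be repeated.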

The molecular Hamiltonian of H$_2$ within a minimal basis set is rewritten as

$$H = g_0 \textbf{1} + g_1Z_0 + g_2Z_1 + g_3Z_0Z_1 + g_4X_0X_1+g_5Y_0Y_1 $$

The expectation values of the operators {$X_i,Y_i,Z_i$} are measured (quantum computed), while {$g_i$} are numbers that can be (classically) computed and that depend on the H-H bond length ($R$). Since there are only two orbitals, the energy depends on one variational parameter ($\theta$), so the objective is to find the value of $\theta$ that minimizes the energy for a given value of $R$.
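Written out, the energy of a trial state with parameter $\theta$ is just the $g_i$-weighted sum of the measured quantities. This is a restatement of the Hamiltonian above, where $\langle\cdot\rangle_\theta$ is my own shorthand for an expectation value in the trial state (the notation is an assumption, not taken from the paper):

$$E(\theta, R) = g_0(R) + g_1(R)\langle Z_0\rangle_\theta + g_2(R)\langle Z_1\rangle_\theta + g_3(R)\langle Z_0Z_1\rangle_\theta + g_4(R)\langle X_0X_1\rangle_\theta + g_5(R)\langle Y_0Y_1\rangle_\theta$$

Only the expectation values require the quantum computer; the dependence on $R$ enters solely through the classically computed {$g_i$}.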

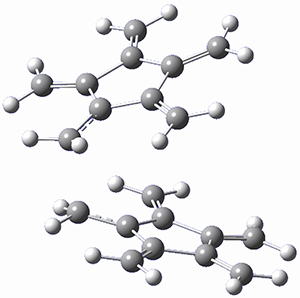

So the authors set up a quantum algorithm that outputs {$X_i,Y_i,Z_i$} given an initial state that depends on $\theta$. This is done for a thousand different values of $\theta$, and for each value of $R$ the value of $\theta$ that minimizes the energy defined by the Hamiltonian above is found by classical minimization, to yield a PES (see Figure 2 from the paper reproduced below).
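The scan-then-minimize procedure can be mimicked classically with a small NumPy sketch. It rests on several assumptions of mine: the trial state is taken to be $e^{-i\theta X_0Y_1}|01\rangle$ (my reading of the paper's ansatz, which is not spelled out above), the expectation values are computed exactly rather than measured, and the coefficients in `g_example` are made-up placeholders, not values from the paper. The names `trial_state`, `expectation_values` and `energy_scan` are hypothetical.

```python
import numpy as np

# Single-qubit identity and Pauli matrices.
I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)

# Two-qubit operators in the order of the Hamiltonian terms:
# 1, Z0, Z1, Z0Z1, X0X1, Y0Y1 (qubit 0 is the left Kronecker factor).
OPERATORS = [np.kron(a, b) for a, b in
             [(I2, I2), (Z, I2), (I2, Z), (Z, Z), (X, X), (Y, Y)]]


def trial_state(theta):
    """Assumed ansatz exp(-i*theta*X0Y1)|01>, expanded using (X0Y1)^2 = 1."""
    ket01 = np.array([0, 1, 0, 0], dtype=complex)
    x0y1 = np.kron(X, Y)
    return np.cos(theta) * ket01 - 1j * np.sin(theta) * (x0y1 @ ket01)


def expectation_values(theta):
    """Stand-in for the quantum step: one expectation value per operator."""
    psi = trial_state(theta)
    return np.array([np.vdot(psi, op @ psi).real for op in OPERATORS])


def energy_scan(g, thetas):
    """Classical step: combine the expectation values with the g coefficients."""
    table = np.array([expectation_values(t) for t in thetas])
    return table @ np.asarray(g, dtype=float)


# A thousand theta values, as in the text; the table of expectation values
# does not depend on R, so it could be reused for every bond length.
thetas = np.linspace(-np.pi, np.pi, 1000, endpoint=False)

# Placeholder coefficients g_0..g_5 for a single, unspecified R (illustrative only).
g_example = [-0.30, 0.35, -0.45, 0.55, 0.09, 0.09]

energies = energy_scan(g_example, thetas)
best = int(np.argmin(energies))
print(f"theta_min = {thetas[best]:.4f}, E_min = {energies[best]:.6f}")
```

Repeating the last three lines with a different set of {$g_i$} for each $R$ would trace out a PES without any new "quantum" evaluations, which is the part of the scheme I find most appealing.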

The "exponentially costly precompilation" mentioned above refers to the fact that the conventional quantum algorithm approach, quantum phase estimation (QPE) (source):

requires a large number of n-qubit quantum controlled operations to be performed in series—placing considerable demands on the number of components and coherence time—while the inherent parallelism of our scheme enables a small number of n-qubit gates to be exploited many times, drastically reducing these demands.

This work is licensed under a Creative Commons Attribution 4.0